High-profile elections, including the US Presidential election, are set to take place next year. We have supported and safeguarded elections across our products for many years, and we will continue that commitment.

In 2024, we will focus on protecting our platforms, helping people make informed decisions, providing high-quality information to voters, and equipping campaigns with best-in-class security. We will pay particular attention to the role artificial intelligence (AI) may play. AI offers new opportunities but also presents challenges. Our AI models will strengthen our efforts to combat abuse and help us enforce our policies more effectively at scale. At the same time, we are preparing for the ways AI can affect the spread of misinformation. Here’s how we are approaching these new challenges.

Safeguarding Our Platforms from Abuse

In recent years, we have supported numerous elections around the world, and we continually apply what we learn to strengthen our protections against harmful content and keep experiences trustworthy. Long-standing policies guide our approach to manipulated media, hate and harassment, incitement to violence, and demonstrably false claims that could undermine democratic processes. For over a decade, we have used machine learning classifiers and AI to identify and remove content that violates these policies. With advances in our large language models (LLMs), we are experimenting with building faster and more adaptable enforcement systems. Early results suggest that this will enable us to act even more quickly when new threats emerge.

We are also taking a principled and responsible approach to introducing generative AI products, prioritizing testing for safety risks that range from cybersecurity vulnerabilities to misinformation and fairness. In preparation for the 2024 elections, and out of caution on a topic this important, we will restrict the types of election-related queries for which Bard and SGE will return responses.

Helping People Identify AI-Generated Content

To help people identify AI-generated media that may look realistic, we have introduced several new tools and protections, including ads disclosures, content labels, additional context, and digital watermarking.

Surfacing High-Quality Information to Voters

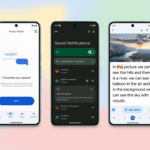

During elections, people look for information on candidates, voter registration deadlines, polling locations, and more. We make this information easy to find across Search, News, YouTube, and Maps, connecting people to high-quality election news and information.

Partnering to Equip Campaigns with Best-in-Class Security

Elections come with heightened cybersecurity risks. Our Advanced Protection Program is available to high-risk individuals, and in collaboration with Defending Digital Campaigns (DDC), we provide campaigns with the security tools they need to stay safe online. Through our Campaign Security Project, we have trained thousands of campaign and election officials in digital security best practices. Additionally, our Threat Analysis Group (TAG) and Mandiant Intelligence help identify, monitor, and address emerging threats, and help keep the public and private sectors informed and vigilant.