Unveiling Gemini 1.5

Written by Demis Hassabis, CEO of Google DeepMind, on behalf of the Gemini team

The world of artificial intelligence is at an exciting juncture. Recent developments in this field have the potential to greatly enhance the usefulness of AI for billions of people in the years to come. Since the introduction of Gemini 1.0, we’ve been rigorously testing, refining, and improving its capabilities.

Today, we are thrilled to announce our latest offering: the next-generation model, Gemini 1.5.

Gemini 1.5 delivers significantly improved performance and marks a step change in our approach. It builds on research and engineering innovations that span nearly every aspect of our model development and infrastructure. Notably, Gemini 1.5 is more efficient to train and deploy, thanks to a new Mixture-of-Experts (MoE) architecture.

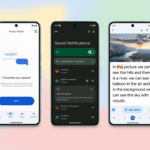

The first iteration of Gemini 1.5 that we are releasing for early testing is Gemini 1.5 Pro. This mid-size multimodal model is designed for scalability across a wide range of tasks and delivers performance comparable to that of 1.0 Ultra, our largest model to date. It also features an experimental breakthrough in long-context understanding.

Gemini 1.5 Pro comes with a standard 128,000-token context window. Starting today, however, a limited group of developers and enterprise customers can try a context window of up to 1 million tokens via AI Studio and Vertex AI in private preview.

As we roll out the full 1 million-token context window, we are actively working on optimizations to reduce latency, lower computational requirements, and improve the overall user experience. We are excited for people to try this capability, and we will share more details on its general availability in the near future.

These ongoing advances in our next-generation models will open up new opportunities for individuals, developers, and enterprises to create, explore, and build with AI.